How We Collect Domain Names For Our Datasets

As you may already know, there is no easy way to get a list of all registered domain names. Most registries (especially ccTLD registries) don’t provide access to their zone files. When we faced the problem of obtaining lists of all registered domain names across the entire Internet, we started researching and ended up building our own set of Big Data tools to collect as many existing domains as possible.

Today we use a combination of reverse engineering, crawling, and data obtained from third-party providers such as CommonCrawl.
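To illustrate the crawling part, here is a minimal sketch, using only the Python standard library, of how hostnames could be pulled out of the links on a crawled HTML page. The names `LinkHostExtractor`, `normalize_host` and `extract_hosts` are hypothetical, and this is not our production crawler. The normalization (lowercasing, dropping a trailing dot and a leading `www.`) is a simplifying assumption; a real pipeline would also reduce hostnames to registrable domains using the Public Suffix List, which this sketch does not do.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


def normalize_host(host: str) -> str:
    """Lowercase, drop a trailing dot and a leading 'www.' (a simplification)."""
    host = host.lower().rstrip(".")
    return host[4:] if host.startswith("www.") else host


class LinkHostExtractor(HTMLParser):
    """Collects the hostnames of all <a href="..."> links on one page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.hosts: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the page URL first.
                parts = urlsplit(urljoin(self.base_url, value))
                # Keep only web links; mailto:, javascript:, etc. are skipped.
                if parts.scheme in ("http", "https") and parts.hostname:
                    self.hosts.add(normalize_host(parts.hostname))


def extract_hosts(html: str, base_url: str) -> set[str]:
    parser = LinkHostExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.hosts


if __name__ == "__main__":
    page = ('<a href="https://www.Example.com/a">x</a> '
            '<a href="/about">y</a> <a href="mailto:a@b.org">z</a>')
    print(sorted(extract_hosts(page, "https://news.example.org/")))
    # → ['example.com', 'news.example.org']
```

Each crawled page then contributes a small set of candidate domains, which can be deduplicated against what is already known.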

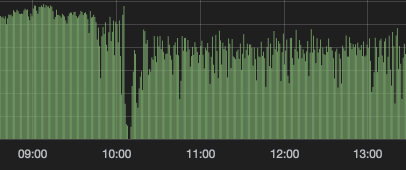

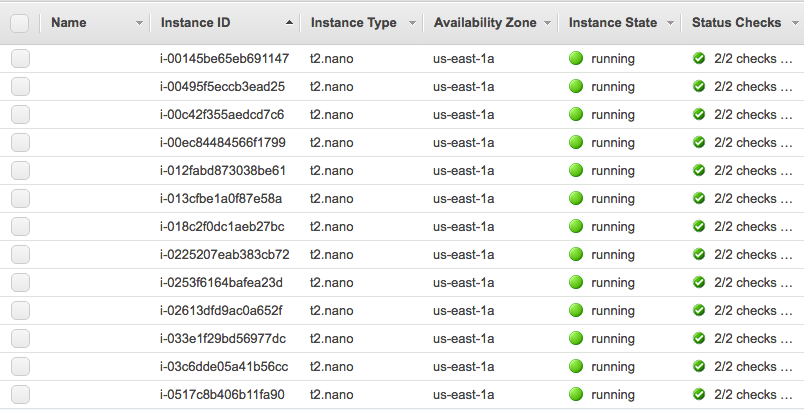

For crawling and reverse engineering, we rely on the AWS Cloud, running plenty of EC2 instances.

Our internal database is updated daily, and we release new datasets each month.
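A daily update has to add newly found domains without losing the date each domain was first seen (the "Date added" column described below). The following is a minimal sketch of that merge step, assuming an in-memory `dict` as a stand-in for the internal database; `merge_daily_batch` is a hypothetical name, not our actual code.

```python
from datetime import date


def merge_daily_batch(index: dict[str, date], batch: set[str], seen_on: date) -> int:
    """Add newly discovered domains to `index`, keeping first-seen dates.

    `index` maps domain -> date it was first added. Existing entries are
    never overwritten, so re-discovering a domain keeps its original date.
    Returns how many domains in this batch were new.
    """
    new_count = 0
    for domain in batch:
        key = domain.strip().lower().rstrip(".")  # simple normalization
        if key and key not in index:
            index[key] = seen_on
            new_count += 1
    return new_count


if __name__ == "__main__":
    index: dict[str, date] = {}
    merge_daily_batch(index, {"example.com", "example.org"}, date(2024, 1, 1))  # 2 new
    merge_daily_batch(index, {"Example.com", "example.net"}, date(2024, 1, 2))  # 1 new
    print(index["example.com"])  # → 2024-01-01
```

Because only missing keys are inserted, running the merge every day is idempotent for domains already in the index.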

We provide not just plain lists of domain names but updated, verified, and enriched datasets that you can use for data mining or web scraping, or filter and sort according to your goals.

Domains Index datasets are CSV files that contain the following columns:

- Domain is the domain name itself;

- Date added is the date when the domain first entered our database;

- NS Servers is the list of two or more name servers returned for the domain at our last NS check;

- IP address is the IP address from the domain’s A record. The A record (also called the Address record or sometimes the Host record) maps a domain name to an IPv4 address, pointing your domain to the server that hosts your website;

- Country is the ISO country code of the country where the domain is hosted, determined by looking up the A record IP address in a GeoIP database.
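As an example of filtering a dataset for your own goals, here is a minimal sketch that loads a Domains Index CSV with Python’s `csv` module and selects domains hosted in one country. It assumes the header row uses exactly the column names listed above and that the NS servers share a single quoted cell separated by commas; both are assumptions, so adjust `ns_separator` and the keys to match the actual file. `DomainRecord`, `read_domains_index` and `filter_by_country` are hypothetical names, and the sample rows use documentation IP ranges.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class DomainRecord:
    domain: str
    date_added: str        # kept as text; the date format is not specified
    ns_servers: list[str]
    ip_address: str
    country: str


def read_domains_index(fileobj, ns_separator: str = ",") -> list[DomainRecord]:
    """Parse a Domains Index CSV (assumed header names) into records."""
    records = []
    for row in csv.DictReader(fileobj):
        ns = [s.strip().lower() for s in row["NS Servers"].split(ns_separator) if s.strip()]
        records.append(DomainRecord(
            domain=row["Domain"].strip().lower(),
            date_added=row["Date added"].strip(),
            ns_servers=ns,
            ip_address=row["IP address"].strip(),
            country=row["Country"].strip().upper(),
        ))
    return records


def filter_by_country(records, country_code: str) -> list[DomainRecord]:
    """Return records hosted in `country_code`, sorted by domain name."""
    wanted = country_code.upper()
    return sorted((r for r in records if r.country == wanted), key=lambda r: r.domain)


if __name__ == "__main__":
    sample = io.StringIO(
        "Domain,Date added,NS Servers,IP address,Country\n"
        'example.com,2024-01-01,"ns1.example.com,ns2.example.com",192.0.2.10,US\n'
        'example.de,2024-01-02,"ns1.example.de,ns2.example.de",198.51.100.7,DE\n'
    )
    rows = read_domains_index(sample)
    print([r.domain for r in filter_by_country(rows, "us")])  # → ['example.com']
```

For a full monthly file, open it with `open(path, newline="", encoding="utf-8")` and pass the file object to `read_domains_index` in the same way.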

With this data, you can stay in the flow of your analysis instead of worrying about the freshness of your data.

We are excited to see you searching for information with Domains Index and would love to hear how you use the data. Please reach out to us with any feedback or requests so that we can keep adding the data you are interested in!